MOSIP delves into biometric data quality considerations

Biometric data quality was in focus at MOSIP Connect 2026 in Rabat, Morocco, from policies for ensuring good enrollment practices to tools for assessment.

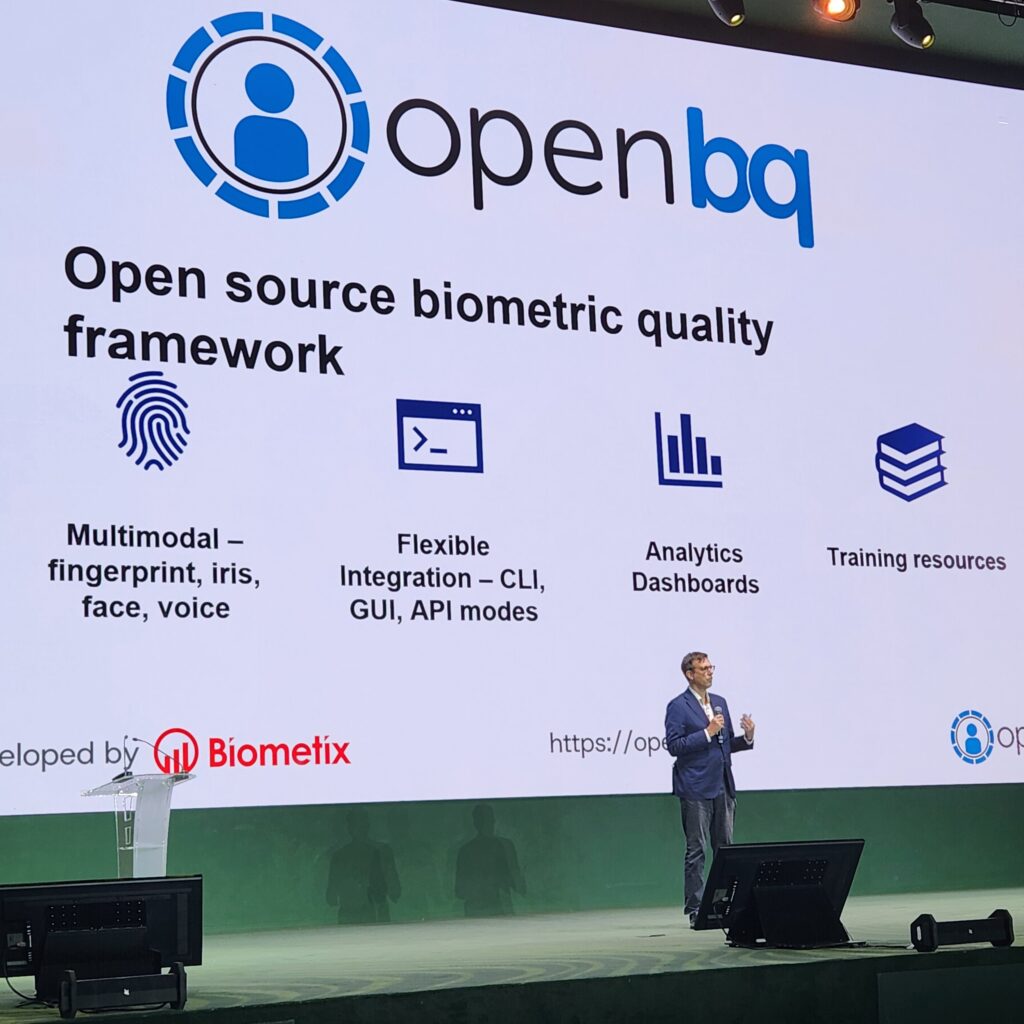

Among significant developments at the event was the launch of openbq, the open-source MOSIP implementation of the BQAT biometric data quality investigation tool developed by Biometix.

Brownfield implementations of MOSIP, which were another theme of the event, require the migration of biometric data. But biometrics migration can degrade data quality if not managed carefully. Uganda, which recently completed the first migration of a legacy biometric database to MOSIP’s SBI, did not experience this problem, which NIRA ED Rosemary Kisembo attributed in part to the effectiveness of the software previously provided by Neurotechnology.

But Kisembo herself experienced face biometrics capture performing worse for people with dark skin, and she noted that Ugandans with lighter skin were told the light was too bright. Understanding how to handle exceptional faces in the ABIS took time, she said during a day 1 keynote.

Ethiopia’s NIDP was faced with a dilemma when poor fingerprint quality led to matches against other people’s biometrics, as explained during a day 2 presentation.

“Bad quality will always match with bad quality,” Biometix and BixeLab CEO Ted Dunstone noted during the presentation.

If Ethiopia’s biometric enrollment device operators were allowed to make exceptions for people enrolling, the system would end up with too many people registered without its main biometric. Where people had no fingerprints to collect, such as those without fingers, manual exceptions were allowed. Low-quality fingerprints, however, were assessed post-capture, which took the decision away from enrollment operators and allowed biometric data quality to be balanced with inclusion.
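The enrollment policy described above can be sketched as a small triage function. This is an illustration only, not NIDP's or MOSIP's implementation: the `FingerprintCapture` type, the 0 to 100 quality scale, and the threshold value are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ACCEPT = "accept"
    MANUAL_EXCEPTION = "manual_exception"
    POST_CAPTURE_REVIEW = "post_capture_review"


@dataclass
class FingerprintCapture:
    # Hypothetical record; field names are illustrative, not a MOSIP schema.
    fingers_present: bool
    quality_score: Optional[float]  # assumed 0-100 scale, None if not scored


# Assumed threshold for illustration; a real programme would set its own.
QUALITY_THRESHOLD = 60.0


def triage(capture: FingerprintCapture,
           threshold: float = QUALITY_THRESHOLD) -> Decision:
    """Route a capture without leaving the quality call to the operator."""
    if not capture.fingers_present:
        # No fingerprints to collect: the only case for a manual exception.
        return Decision.MANUAL_EXCEPTION
    if capture.quality_score is None or capture.quality_score < threshold:
        # Low or unknown quality is judged after capture, not at the desk.
        return Decision.POST_CAPTURE_REVIEW
    return Decision.ACCEPT
```

The point of the sketch is that the operator never chooses the low-quality branch: the routing depends only on whether fingers are present and on the measured score.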

Dunstone made the case for dedicated biometric data quality teams that are responsible for quality and empowered to take action.

He also noted that many people find biometrics capture difficult and stressful, making it important to avoid repeated attempts, let alone re-enrollment. One way to do that is to identify data quality issues during the pilot stage.

Another way is by using an enrollment device certified under the MACP (MOSIP Advanced Compliance Program), for which BixeLab is the first accredited testing body.

MOSIP Biometric Ecosystem Head Sanjith Sundaram reviewed the program, which has so far certified biometric devices from IriTech and Thales.

openbq’s implications

During the seminar, Dunstone described openbq as an “aggregator of quality algorithms” that is designed more to produce actionable feedback than to assess data on its way into the system.

“In large-scale digital identity programmes, biometric quality is a primary determinant of enrolment success, downstream matching accuracy, and long-term system sustainability,” Dunstone told Biometric Update in emailed comments on the launch. “Within MOSIP implementations, openbq introduces objective, standards-aligned quality analytics that enable programme owners to detect systemic capture deficiencies early. By reducing avoidable enrolment failures and improving consistency across devices and environments, openbq strengthens inclusion outcomes, particularly for diverse and hard-to-enrol populations.”

Biometric quality is not just a technical parameter, but a governance control, Dunstone says. Assessing it at the time of capture can help avoid costly re-enrollment and manual reviews.

“openbq provides independent, standards-based quality measurement, enabling governments to monitor trends, benchmark vendor performance, and exercise evidence-based oversight at national scale.”
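As a rough illustration of what benchmarking capture performance from quality scores could look like, the sketch below averages scores per device and flags those falling under a floor. It does not use openbq's actual interface or output format: the `(device_id, score)` record shape, the 0 to 100 scale, and the floor value are assumptions for the example.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple

# Hypothetical record: (device_id, quality_score on an assumed 0-100 scale).
Record = Tuple[str, float]


def mean_quality_by_device(records: Iterable[Record]) -> Dict[str, float]:
    """Group quality scores by capture device and average them."""
    scores: Dict[str, List[float]] = defaultdict(list)
    for device_id, score in records:
        scores[device_id].append(score)
    return {device_id: mean(vals) for device_id, vals in scores.items()}


def flag_devices(records: Iterable[Record], floor: float = 60.0) -> List[str]:
    """Return device IDs whose average quality falls below the assumed floor."""
    averages = mean_quality_by_device(records)
    return sorted(d for d, avg in averages.items() if avg < floor)
```

Grouping by device is only one possible cut; the same aggregation could be keyed by enrollment site or operator to look for systemic capture problems.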

Quality assessment provides evidence to inform policy, including for training and procurement, and enables early identification of risks so that timely corrective action can be taken.

“The result is fewer avoidable enrolment failures, stronger accountability, improved inclusion, and greater public confidence at national scale,” says Dunstone.
